Two weeks ago our team, Gless AI, placed 2nd out of 350+ teams at the international Agentic Legal RAG Challenge 2026 and took home $8,000. We were supposed to fly to Dubai to present in person at the Machines Can See conference during Dubai AI Week, but couldn't make it due to the situation, so here are the takeaways instead.

What the competition was

The task sounds simple: answer 900 legal questions over a corpus of 300 PDFs — court decisions, laws, regulations from the DIFC (Dubai International Financial Centre) — with exact source pages cited as grounding.

In practice, the scoring formula was the harshest we've seen in any RAG benchmark:

- Scoring was multiplicative — weak grounding (the pages you cite as sources) crushed the entire result no matter how good the answers were.

- Some questions were traps: they referenced cases or laws that don't exist in the corpus, and the correct answer was "nothing found." A confident hallucination cost multiple scoring components at once.

- Latency mattered: slow pipelines were penalized by a separate multiplier.
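To make the effect of multiplicative scoring concrete, here is a minimal sketch. It is not the organizers' formula, which this post doesn't reproduce: the function names, the three factors scaled to [0, 1], and all the numbers are assumptions chosen for illustration. It compares a product of factors with a plain average for three hypothetical pipelines.

```python
# Illustrative only: the real challenge formula is not shown in this post.
# Each factor is assumed to be normalized to the range [0, 1].

def multiplicative_score(answer: float, grounding: float, latency: float) -> float:
    """Product of the factors: one weak factor drags the whole score down."""
    return answer * grounding * latency


def additive_score(answer: float, grounding: float, latency: float) -> float:
    """Plain average: a weak factor can be offset by the strong ones."""
    return (answer + grounding + latency) / 3


# Hypothetical pipelines as (answer quality, grounding, latency multiplier).
pipelines = {
    "fast but ungrounded": (0.90, 0.30, 1.00),
    "accurate but slow": (0.98, 0.95, 0.55),
    "balanced": (0.80, 0.80, 0.85),
}

for name, factors in pipelines.items():
    product = multiplicative_score(*factors)
    mean = additive_score(*factors)
    print(f"{name:22s} product={product:.3f} mean={mean:.3f}")
```

With these made-up numbers, the average ranks the accurate-but-slow pipeline first, while the product ranks the balanced one first and pushes the ungrounded bot far down. That is the behavior described above: no single strong component can compensate for a weak one.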

In other words, the benchmark was specifically built to reward production-grade systems. A "beautifully over-engineered slow pipeline" and a "fast but ungrounded bot" both lost.

Final results

The top-5 teams finished within six points of each other:

- RAGnarok — 77.9

- Gless AI — 76.7

- CPBD — 76.0

- Cohomology — 72.0

- Dmitry Ulybin — 71.9

Full leaderboard at agentic-challenge.ai/leaderboard.

Why this matters for business

Most RAG systems we see in production are optimized for a single metric — "does it answer or not." That's not enough. In legal, medical, or financial work, every citation has to be verifiable, and a confident wrong answer is worse than "I didn't find it."

That's exactly what this competition tested: you couldn't win with a slow over-engineered pipeline, and you couldn't win with a fast but ungrounded one. So 2nd place out of 350+ teams matters to us more than the prize money — it's concrete proof that we can build RAG that holds up to production load and to clients who check the citations.

Takeaways

A few short lessons that apply to any RAG project, not just legal:

- Grounding isn't a feature — it's the core of the system. If a user can't click through to the source page, RAG in serious domains is useless.

- A simple pipeline with the right details beats "smart" agents. In the final phase, our 600 lines of Python beat a SOTA agent that ran on the same task for 1.5 hours.

- Measure answer quality, grounding, and latency together. Optimizing for any one of them in isolation is misleading: a pipeline that answers beautifully but is slow or ungrounded won't survive in production.
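As a rough sketch of what measuring all three together can look like per question, the snippet below records answer correctness, page-level grounding, and latency in one pass. It is not our competition code. The names `page_f1` and `evaluate`, the `(document, page)` tuple format, exact-match answer checking, and the stub pipeline are all assumptions made for illustration.

```python
import time
from typing import Callable

Page = tuple[str, int]  # (document name, page number): an assumed format


def page_f1(cited: set[Page], gold: set[Page]) -> float:
    """F1 overlap between cited pages and gold pages."""
    if not cited and not gold:
        return 1.0  # trap question: citing nothing is the correct behavior
    overlap = len(cited & gold)
    if overlap == 0:
        return 0.0
    precision = overlap / len(cited)
    recall = overlap / len(gold)
    return 2 * precision * recall / (precision + recall)


def evaluate(
    pipeline: Callable[[str], tuple[str, list[Page]]],
    question: str,
    gold_answer: str,
    gold_pages: list[Page],
) -> dict:
    """Run one question and record all three measurements together."""
    start = time.perf_counter()
    answer, cited_pages = pipeline(question)
    latency_s = time.perf_counter() - start
    return {
        # Exact match is a simplification; real answer scoring is richer.
        "answer_correct": answer.strip().lower() == gold_answer.strip().lower(),
        "grounding_f1": page_f1(set(cited_pages), set(gold_pages)),
        "latency_s": latency_s,
    }


def stub_pipeline(question: str) -> tuple[str, list[Page]]:
    """Hypothetical stand-in for a real RAG pipeline."""
    return "Yes", [("law_01.pdf", 3), ("law_01.pdf", 4)]


result = evaluate(stub_pipeline, "Is clause X valid?", "yes", [("law_01.pdf", 3)])
print(result)
```

Keeping the three numbers in one record per question makes it harder to celebrate an accuracy gain that quietly cost grounding or latency.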

Technical details

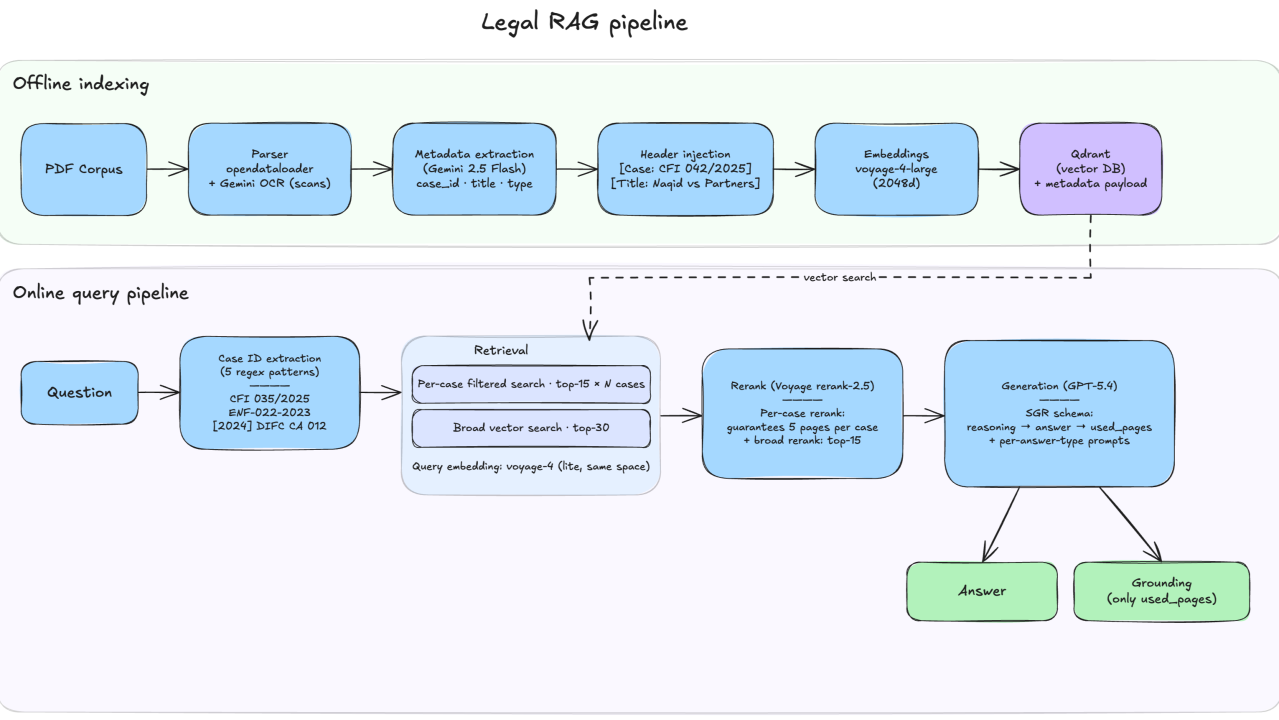

If you want the full technical breakdown — parsing, embeddings, retrieval, reranking, structured output, what we tried and dropped — we wrote a detailed technical write-up on LinkedIn.

If you're building a RAG system where the cost of a wrong answer is your reputation or regulatory exposure, get in touch — we'll help you design a pipeline where citations actually work and users trust the answers.